Associate Professor Sue Beckingham (Sheffield Hallam University) and Professor Peter Hartley (Edge Hill University) share insights from their recent work in search of responsible Generative AI.

Please complete the following sentence from your own perspective before you read on:

“2026 is shaping up to be the most XXXX year yet in the chequered history of Generative AI.”

Depending on which tech guru you follow/believe, you may have replaced XXXX with a variety of sentiments from ‘exciting’ and ‘transformative’ to ‘catastrophic’ or ‘dangerous’.

On the one hand, we have the confident predictions from leading tech ‘entrepreneurs’ like Sam Altman, CEO of OpenAI (the provider of ChatGPT), that the ultimate goal of the AI industry is just around the corner: Artificial General Intelligence (AGI), where AI can complete the widest range of tasks better than the best humans. That will of course mean the end for many if not most jobs and careers. Fortunately, Elon Musk has assured us that mass prosperity is the logical outcome (as has Altman). So, we no longer need to save for retirement.

On the other hand, we have a selection of experienced and respected tech-industry pioneers like Geoffrey Hinton and Stuart Russell warning us that this technology could easily become dangerous and out of control. The latest controversy surrounding Grok and its facility to produce demeaning deepfakes does not make us feel confident that our technical leaders can be relied upon to behave responsibly and ethically.

Given this weight of controversy, it is concerning that one elephant in the room is not being considered more seriously or more widely – the costs of using this technology.

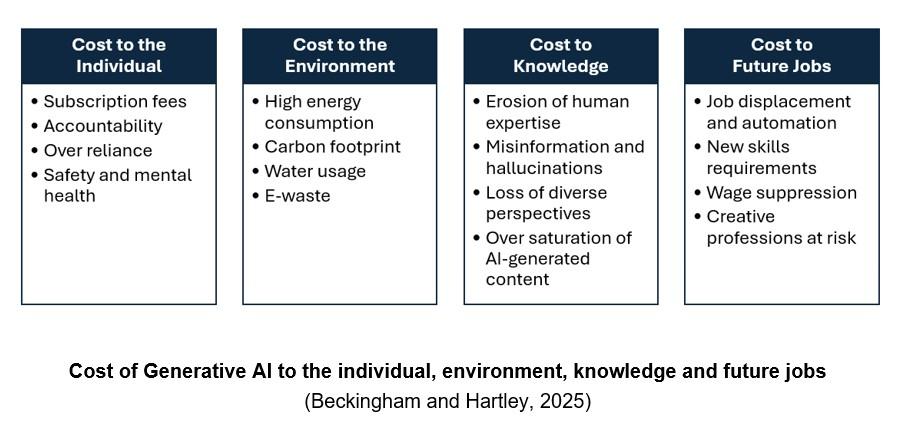

We talk of ‘costs’ to highlight that there is a whole range of costs associated with this technology, not just economic ones, and our current attempt to map these is the accompanying diagram.

Acknowledging the Costs of Generative AI

Critical AI literacy involves understanding how to use GenAI responsibly and ethically – knowing when and when not to use it, and the reasons why. The costs of GenAI are a critical component of this literacy. For example:

Cost to the Individual

Fees: Continually changing subscription tiers for GenAI tools range from free for the basic version through to different levels of paid upgrades. Premium models (e.g. enterprise AI assistants) are costly, limiting access to businesses or high-income users.

Accountability: A lack of clear guidelines on what can and cannot be shared with GenAI raises concerns about infringing copyright.

Over-reliance: Outcomes for learning depend on how GenAI apps are used. If users rely on AI-generated content too heavily or exclusively, they can make poor decisions, with a detrimental effect on skills.

Safety and mental health: Increased use of personal assistants providing ‘personal advice’ for socioemotional purposes can lead to increased social isolation.

Cost to the Environment

Energy consumption: Training LLMs requires millions of GPU hours on dedicated infrastructure, and energy demand increases substantially for image generation. The growth of data centres also creates concerns for energy supply.

Emissions and carbon footprint: Developing the technology creates emissions through the mining, manufacturing, transport and recycling processes.

Water consumption: Water needed for cooling in the data centres equates to millions of gallons per day.

e-Waste: This includes toxic materials (e.g. lead, barium, arsenic and chromium) in components within an ever-increasing number of LLM servers. Obsolete servers generate substantial toxic emissions if not recycled properly.

Cost to Knowledge

Erosion of expertise: Models are trained on information publicly available on the internet, information obtained through formal partnerships with third parties, and information that users or human trainers provide or generate.

Ethics: Ethical concerns highlight the lived experiences of those employed in data annotation and content moderation of text, images and video to remove toxic content.

Misinformation: Indiscriminate data scraping from blogs, social media, and news sites, coupled with text entered by users of LLMs, can result in ‘regurgitation’ of personal data, AI slop, hallucinations and deepfakes.

Bias: Algorithmic bias and discrimination occur when LLMs inherit social patterns, perpetuating stereotypes relating to gender, race, disability and other protected characteristics.

Cost to Future Jobs

Job displacement: GenAI is “reshaping industries and tasks across all sectors”, driving business transformation, replacing rather than augmenting human work.

Job matching: Increased use of AI in recruitment and by jobseekers creates a risk that GenAI misrepresents skills.

New skills: AI and big data top the list of fastest-growing workplace skills. A lack of opportunity to reskill and upskill can lead to increased unemployment and inequality.

Wage suppression: Workers with skills that enable them to use AI may see their productivity and wages increase, whereas others may see their wages decrease.

Ways Forward?

We can only develop GenAI literacy by actively involving our student users. In previous publications and webinars, we have argued that institutions and faculties should establish ‘collaborative sandpits’ offering opportunities for discussion and ‘co-creation’. We repeat that argument here with one important caveat: this is not just another committee!

Staff and students need space so that they can contribute to debates on what we really mean by ‘responsible use of GenAI’ and develop procedures to ensure responsible use. They also need to see and experiment with different technologies to appreciate the variety of approaches now emerging. For example, we can see different strategies emerging from Microsoft and Google on the future technical development of GenAI. But what does this mean for our students’ AI literacy if the institution is committed to a single technical infrastructure? And how do students get to know about developments with strong implications for practical application, such as open-source software, or the relative advantages and disadvantages of Small Language Models (SLMs) in comparison to the Large Language Models we are now familiar with?

We all need to develop a realistic perspective on GenAI’s likely development. The pace of technical change (and some rather secretive corporate habits) makes this very challenging for individuals, so we need proactive and co-ordinated approaches by course/programme teams. The practical implication of this discussion is that we all need to develop a much broader understanding of GenAI than a simple ‘press this button’ approach.

References

Beckingham, S. and Hartley, P. (2025). In search of ‘Responsible’ Generative AI (GenAI). In: Doolan, M.A. and Ritchie, L. (eds.) Transforming teaching excellence: Future proofing education for all. Leading Global Excellence in Pedagogy, Volume 3. UK: IFNTF Publishing. ISBN 978-1-7393772-2-9 (ebook).

About the Authors: AdvanceHE – Working to advance Higher Education